- How to cite "The Ultimate Guide to Qualitative Research - Part 1: The Basics"

Data collection - What is it and why is it important?

The data you collect for your study forms the basis of your analysis. Gathering data in a transparent and thorough manner strengthens the rest of your research and makes it more persuasive to your audience.

We will look at the data collection process, the methods of data collection that exist in quantitative and qualitative research, and the various issues around data in qualitative research.

Data in research

Data can be any sort of information that people use to better understand the world around them. Having this information allows us to draw and verify conclusions robustly, as opposed to relying on blind guesses or thought exercises.

Necessity of data collection skills

Collecting data is critical to the fundamental objective of research as a vehicle to organize knowledge. While this may seem intuitive, it's important to acknowledge that researchers must be as skilled in data collection as they are in data analysis.

Collecting the right data

Rather than just collecting as much data as possible, it's important to collect data that is relevant for answering your research question. Imagine a simple research question: what factors do people consider when buying a car? It would not be possible to ask every living person about their car purchases. Even if it were possible, not everyone drives a car, so asking non-drivers seems unproductive. As a result, a researcher conducting such a study to devise data reports and marketing strategies has to take a sample of the relevant data to ensure reliable analysis and findings.
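To make the sampling idea concrete, here is a minimal Python sketch. It assumes a hypothetical list of potential participants with a `bought_car` flag, and it uses simple random sampling purely for illustration; many qualitative studies select participants purposively instead. The names `population` and `draw_sample` are invented for this example.

```python
import random

# Hypothetical sampling frame: 30 people, some of whom never bought a car.
population = [{"id": i, "bought_car": i % 3 != 0} for i in range(1, 31)]

def draw_sample(frame, size, seed=0):
    """Keep only people relevant to the research question, then sample them."""
    relevant = [person for person in frame if person["bought_car"]]
    rng = random.Random(seed)  # fixed seed so the draw can be repeated
    return rng.sample(relevant, min(size, len(relevant)))

sample = draw_sample(population, size=5)
print([person["id"] for person in sample])
```

Filtering before sampling keeps non-buyers out of the study, and the fixed seed means the same selection can be reported and reproduced later.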

Data collection examples

In the broadest terms, any sort of data gathering contributes to the research process. In any work of science, researchers cannot make empirical conclusions without relying on some body of data to make rational judgments.

Various examples of data collection in the social sciences include:

- responses to a survey about product satisfaction

- interviews with students about their career goals

- reactions to an experimental vitamin supplement regimen

- observations of workplace interactions and practices

- focus group data about customer behavior

Data science and scholarly research have almost limitless possibilities to collect data, and the primary requirement is that the dataset should be relevant to the research question and clearly defined. Researchers thus need to rule out any irrelevant data so that they can develop new theory or key findings.

Types of data

Researchers can collect data themselves (primary data) or use third-party data (secondary data). The data collection considerations regarding which type of data to work with have a direct relationship to your research question and objectives.

Primary data

Original research relies on first-party data, or primary data that the researcher collects themselves for their own analysis. When you collect the information for a primary study yourself, you are more likely to obtain data of the quality you require.

Because the researcher is most aware of the inquiry they want to conduct and has tailored the research process to that inquiry, first-party data collection has the greatest potential for congruence between the data collected and the insights the researcher hopes to generate.

Ethnographic research, for example, relies on first-party data collection since a description of a culture or a group of people is contextualized through a comprehensive understanding of the researcher and their relative positioning to that culture.

Secondary data

Researchers can also use publicly available secondary data that other researchers have generated to analyze following a different approach and thus produce new insights. Online databases and literature reviews are good examples where researchers can find existing data to conduct research on a previously unexplored inquiry. However, it is important to consider data accuracy or relevance when using third-party data, given that the researcher can only conduct limited quality control of data that has already been collected.

Big data

A relatively new consideration in data collection and data analysis has been the advent of big data, where data scientists employ automated processes to collect data in large amounts.

The advantage of collecting data at scale is that a thorough analysis of a greater scope of data can potentially generate more generalizable findings. Nonetheless, working with data at this scale is a daunting task because it is time-consuming and arduous. Moreover, it requires skilled data scientists to sift through large data sets to filter out irrelevant data and generate useful insights.

Qualitative researchers, for their part, need to carefully weigh data breadth against data depth. Qualitative studies typically rely on a relatively small number of participants but collect very detailed data for each one, because understanding the specific context and individual interpretations or experiences is often of central importance. When using big data, this depth is usually replaced with a greater breadth of data that covers a much greater number of participants. Researchers need to consider their need for depth or breadth to decide which data collection method is best suited to answer their research question.

Data collection methods

Different data collection procedures exist depending on the research inquiry you want to conduct. Let's explore the common data collection methods in quantitative and qualitative research.

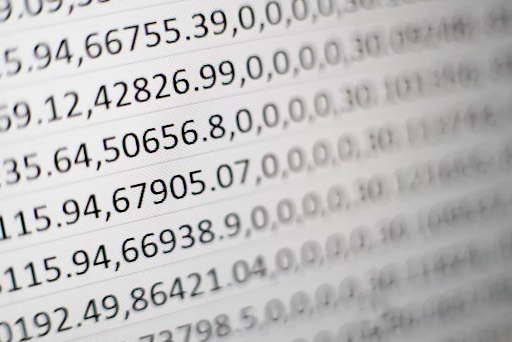

Quantitative data collection methods

Quantitative methods are used to collect numerical or quantifiable data. These can then be processed statistically to test hypotheses and gain insights. Quantitative data gathering is typically aimed at measuring a particular phenomenon (e.g., the amount of awareness a brand has in the market, the efficacy of a particular diet, etc.) in order to test hypotheses (e.g., social media marketing campaigns increase brand awareness, eating more fruits and vegetables leads to better physical performance, etc.).

Some qualitative methods of research can contribute to quantitative data collection and analysis. Online surveys and questionnaires with multiple-choice questions can produce structured data ready to be analyzed. A survey platform like Qualtrics, for example, aggregates survey responses in a spreadsheet to allow for numerical or frequency analysis.
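As a rough illustration of the frequency analysis that structured survey responses allow, the sketch below tallies answers to a single multiple-choice question. The answer values and the `frequency_table` helper are hypothetical, and the code does not reflect how any particular survey platform exports or processes its data.

```python
from collections import Counter

# Hypothetical answers to one multiple-choice question, one per respondent.
responses = ["Satisfied", "Neutral", "Satisfied",
             "Dissatisfied", "Satisfied", "Neutral"]

def frequency_table(answers):
    """Map each answer option to (count, percentage of all answers)."""
    counts = Counter(answers)
    total = len(answers)
    return {option: (n, round(100 * n / total, 1))
            for option, n in counts.most_common()}

table = frequency_table(responses)
for option, (n, pct) in table.items():
    print(f"{option}: {n} ({pct}%)")
```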

Qualitative data collection methods

Analyzing qualitative data is important for describing a phenomenon (e.g., the requirements for good teaching practices), which may lead to the creation of propositions or the development of a theory. Behavioral data, transactional data, and data from social media monitoring are examples of different forms of data that can be collected qualitatively.

Consideration of tools or equipment for collecting data is also important. Primary data collection methods in observational research, for example, employ tools such as audio and video recorders, notebooks for writing field notes, and cameras for taking photographs. As long as the products of such tools can be analyzed, those products can be incorporated into a study's data collection.

Employing multiple data collection methods

Qualitative researchers seldom rely on one data collection method alone. Ethnographic researchers, in particular, can incorporate direct observation, interviews, focus group sessions, and document collection in their data collection process to produce the most contextualized data for their research. Mixed methods research employs multiple data collection methods, including qualitative and quantitative data, along with multiple tools to study a phenomenon from as many different angles as possible.

New forms of data collection

External data sources such as social media data and big data have also gained contemporary focus as social trends change and new research questions emerge. This has prompted the creation of novel data collection methods in research.

Ultimately, there are countless data collection instruments used for qualitative methods, but the key objective is to produce relevant data that can be systematically analyzed. This means researchers can analyze audio, video, images, and other formats beyond text. As our world continues to change, for example with the growing prominence of generative artificial intelligence and social media, researchers will undoubtedly bring forth new inquiries that require continuous innovation and adaptation of data collection methods.

Challenges in data collection

Collecting data for qualitative research is a complex process that often comes with unique challenges. This section discusses some of the common obstacles that researchers may encounter during data collection and offers strategies to navigate these issues.

Access to participants

Obtaining access to research participants can be a significant challenge. This might be due to geographical distance, time constraints, or reluctance from potential participants. To address this, researchers need to clearly communicate the purpose of their study, ensure confidentiality, and be flexible with their scheduling.

Cultural and language barriers

Researchers may face cultural and language barriers, particularly in cross-cultural research. These barriers can affect communication and understanding between the researcher and the participant. Employing translators, cultural mediators, or learning the local language can be beneficial in overcoming these barriers.

Non-responsive or uncooperative participants

At times, researchers might encounter participants who are unwilling or unable to provide the required information. In these situations, the researcher should aim to build trust, create a comfortable environment for the participant, and reassure them about the confidentiality of their responses.

Time constraints

Qualitative research can be time-consuming, particularly when involving interviews or focus groups that require coordination of multiple schedules, transcription, and in-depth analysis. Adequate planning and organization can help mitigate this challenge.

Bias in data collection

Bias in data collection can occur when the researcher's preconceptions or the participant's desire to present themselves favorably affect the data. Strategies for mitigating bias include reflexivity, triangulation, and member checking.

Handling sensitive topics

Research involving sensitive topics can be challenging for both the researcher and the participant. Ensuring a safe and supportive environment, practicing empathetic listening, and providing resources for emotional support can help navigate these sensitive issues.

Collecting data in qualitative research can be a very rewarding but challenging experience. However, with careful planning, ethical conduct, and a flexible approach, researchers can effectively navigate these obstacles and collect robust, meaningful data.

Considerations when collecting data

Research relies on empiricism and credibility at all stages of a research inquiry. As a result, there are various data collection problems and issues that researchers need to keep in mind.

Data quality issues

Your analysis may depend on capturing the fine-grained details that some data collection tools may miss. In that case, you should carefully consider data quality issues regarding the precision of your data collection. For example, think about a picture taken with a smartphone camera and a picture taken with a professional camera. If you need high-resolution photos, it would make sense to rely on a professional camera that can provide adequate data quality.

Quantitative data collection often relies on precise data collection tools to evaluate outcomes, but researchers collecting qualitative data should also be concerned with quality assurance. For example, suppose a study involving direct observation requires multiple observers in different contexts. In that case, researchers should take care to ensure that all observers can gather data in a similar fashion to ensure that all data can be analyzed in the same way.

Data quality is a key consideration when gathering information. Even if the researcher has chosen an appropriate method for data collection, is the data that they collect useful and detailed enough to provide the necessary analysis to answer the given research inquiry?

Interviews and focus groups are one example where data quality is consequential in qualitative data collection. Recordings may lose some of the finer details of social interaction, such as pauses, filler words, or utterances that aren't loud enough for the microphone to pick up.

Suppose you are conducting an interview for a study where such details are relevant to your analysis. In that case, you should consider employing tools that collect sufficiently rich data to record these aspects of interaction.

Data integrity

The possibility of inaccurate data has the potential to confound the data analysis process, as drawing conclusions or making decisions becomes difficult, if not impossible, with low-quality data. Failure to establish the integrity of data collection can cast doubt on the findings of a given study.

Accurate data collection is just one aspect researchers should consider to protect data integrity. After that, it is a matter of preserving the data after data collection. How is the data stored? Who has access to the collected data? To what extent can the data be changed between data collection and research dissemination?
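One possible way to check whether a stored file has changed between data collection and dissemination is to record a cryptographic fingerprint of it at collection time and compare it later. The sketch below uses Python's standard `hashlib` module; the file name, the transcript text, and the helpers `fingerprint` and `is_unchanged` are assumptions made for illustration, not a prescribed procedure.

```python
import hashlib
import tempfile
from pathlib import Path

def fingerprint(path):
    """Return the SHA-256 hex digest of a file's contents."""
    digest = hashlib.sha256()
    with open(path, "rb") as handle:
        # Read in chunks so large recordings do not have to fit in memory.
        for chunk in iter(lambda: handle.read(65536), b""):
            digest.update(chunk)
    return digest.hexdigest()

def is_unchanged(path, recorded_digest):
    """Compare the file's current fingerprint with the one recorded earlier."""
    return fingerprint(path) == recorded_digest

# Demonstration with a temporary stand-in for a transcript file.
with tempfile.TemporaryDirectory() as folder:
    transcript = Path(folder) / "interview_01.txt"
    transcript.write_text("P1: I mostly compared fuel costs.\n", encoding="utf-8")
    recorded = fingerprint(transcript)  # store this alongside the data
    unchanged_before_edit = is_unchanged(transcript, recorded)
    transcript.write_text("P1: I only compared fuel costs.\n", encoding="utf-8")
    unchanged_after_edit = is_unchanged(transcript, recorded)

print(unchanged_before_edit, unchanged_after_edit)
```

A fingerprint only reveals that something changed, not what or why, so it complements rather than replaces access controls and careful record keeping.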

Data integrity is an issue of research ethics as well as research credibility. The researcher needs to establish that the data presented for research dissemination is an accurate representation of the phenomenon under study.

Imagine a photograph of wildlife that has aged so much that its colors have become distorted. If the findings depend on describing the colors of a particular animal or plant, then failing to preserve the integrity of the data presents a serious threat to the credibility of the research and the researcher. In addition, when transcribing an interview or focus group, it is important to take care that participants’ words are accurately transcribed to avoid unintentionally changing the data.

Transparency

As explored earlier, researchers rely on both intuition and data to make interpretations about the world. As a result, researchers have an obligation to explain how they collected data and describe their data so that audiences can also understand it. Establishing research transparency also allows other researchers to examine a study and determine if they find it credible and how they can continue to build off it.

To address this need, research papers typically have a methodology section, which includes descriptions of the tools employed for data collection and the breadth and depth of the data that is collected for the study. It is important to transparently convey every aspect of the data collection and analysis, which might involve providing a sample of the questions participants were asked, demographic information about participants, or proof of compliance with ethical standards, to name a few examples.

Subjectivity

How to gather data is also a key concern, especially in the social sciences, where people's perspectives constitute the collected data and these perspectives can differ vastly.

In interviews and focus groups, how questions are framed may change the nature of the answers that participants provide. In market research, researchers have to design questions carefully so that they elicit useful feedback without inadvertently leading customers toward a certain response. Even in the natural sciences, researchers have to regularly check whether the equipment they use for gathering data is producing accurate data sets for analysis.

Finally, the different methods of data collection raise questions about whether the data says what we think it says. Consider how people might establish monitoring systems to track behavioral data online. When a user spends a certain amount of time on a mobile app, are they deeply engaged in using the app, or are they leaving it on while they work on other tasks?

Data collection is only as useful as the extent to which the resulting data can be systematically analyzed and is relevant to the research inquiry being pursued. While it is tempting to collect as much data as possible, it is the researcher’s analyses and inferences, not just the quantity of data, that ultimately determine the impact of the research.

Validity and reliability in qualitative data

Ensuring validity and reliability in qualitative data collection is paramount to producing meaningful, rigorous, and trustworthy research findings. This section will outline the core principles of validity and reliability, which stem from quantitative research, and then we will consider relevant quality criteria for qualitative research.

Understanding validity

In general terms, validity is about ensuring that the research accurately reflects the phenomena it purports to represent. It is tied to how well the methods and techniques used in a study align with the intended research question and how accurately the findings represent the participants' experiences or perceptions. In qualitative research, however, the co-existence of multiple realities is often recognized, rather than believing there is only one “true” reality out there that can be measured. Thus, qualitative researchers can instead convey credibility by transparently communicating their research question, operationalization of key concepts, and how this translated into their data collection instruments and analysis. Moreover, qualitative researchers should pay attention to whether their own preconceptions or goals might be inadvertently shaping their findings. In addition, potential reactivity effects can be considered, to assess how the research may have influenced their participants or research setting while collecting data.

Understanding reliability

Reliability broadly refers to the consistency of the research approach across different contexts and with different researchers. A quantitative study is considered reliable if its findings can be replicated in a similar context or if the same results can be obtained by a different researcher following the same research procedure.

In qualitative research, however, researchers acknowledge and embrace the specific context of their data and analysis. All knowledge that is generated is context-specific, so rather than claiming that a study’s findings can be reliably reproduced in a wholly different context, qualitative researchers aim to demonstrate the trustworthiness or dependability of their data and findings. Transparent descriptions and clear communication can convey to audiences that the research was conducted with rigor and coherence between the research question, methods, and findings, all of which can bolster the credibility of the qualitative study.

Enhancing data quality

Various strategies can be used to enhance data quality in qualitative research. Among them are:

1. Triangulation: This involves using multiple data sources, methods, or researchers to gather data about the same phenomenon. This can help to ensure the findings are robust and not dependent on a single source.

2. Member checking: This method involves returning the findings to the participants to check if the interpretations accurately reflect their experiences or perceptions. This can help to ensure the validity of the research findings.

3. Thick description: Providing detailed accounts of the context, interactions, and interpretations in the research report can allow others to understand the research process better, which is important to foster the communicability of one’s research.

4. Audit trail: Keeping a detailed record of the research process, decisions, and reflections can increase the transparency and coherence of the study.
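An audit trail can be kept in many forms, from a handwritten research diary to memos in analysis software. As one hedged illustration, the sketch below records each decision as a timestamped entry and exports the trail as one JSON object per line. The field names, the example entries, and the helpers `log_decision` and `export_trail` are hypothetical.

```python
import json
from datetime import datetime, timezone

def log_decision(trail, stage, decision, rationale):
    """Append one timestamped entry describing a research decision."""
    entry = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "stage": stage,
        "decision": decision,
        "rationale": rationale,
    }
    trail.append(entry)
    return entry

def export_trail(trail):
    """Serialize the trail as one JSON object per line for archiving."""
    return "\n".join(json.dumps(entry) for entry in trail)

audit_trail = []
log_decision(audit_trail, "sampling", "Recruit recent car buyers only",
             "Non-buyers cannot describe purchase factors")
log_decision(audit_trail, "interviews", "Added a question about financing",
             "Two early participants raised it unprompted")

print(export_trail(audit_trail))
```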

Using technology in data collection

A wide variety of technologies can be used to work with qualitative data. Technology not only aids in data collection but also in the organization, analysis, and presentation of data.

This section explores some of the key ways that technology can be integrated into qualitative data collection.

Digital tools for data collection

Digital tools can vastly improve the efficiency and effectiveness of data collection. For example, audio and video recording devices can capture interviews, focus groups, and observational data with great detail.

Online surveys and questionnaires can reach a wider audience, often at a lower cost and with quicker turnaround times compared with traditional methods. Mobile applications can also be used to capture real-time experiences, emotions, and activities through diary studies or experience sampling.

Online platforms for qualitative research

Online platforms like social media, blogs, and discussion forums provide a rich source of qualitative data. Researchers can analyze these platforms for insights into people's behaviors, attitudes, and experiences.

In addition, virtual communities and digital ethnography are becoming increasingly common as researchers explore these online spaces.

Ethical considerations with technology

With the increased use of technology, researchers must be mindful of ethical considerations, including privacy and consent. It's important to secure informed consent when collecting data from online platforms or using digital tools, and all researchers should obtain the necessary approvals for collecting data and adhere to any applicable regulations and codes of conduct (such as the GDPR). It's also key to ensure data security and confidentiality when storing data on digital platforms.

Advantages and limitations of technology

While technology offers numerous advantages in terms of efficiency, accessibility, and breadth of data, it also presents limitations. For example, digital tools may not capture the full nuance and richness of face-to-face interactions.

Furthermore, technological glitches and data loss are potential risks. Therefore, it's important for researchers to understand these trade-offs when incorporating technology into their data collection process.

As technology continues to evolve, so too will its applications in qualitative research. Embracing these technological advancements can help researchers to enhance their data collection practices, offering new opportunities for capturing, analyzing, and presenting qualitative data.

Data organization

Data analysis after collecting data is only possible if the data is sufficiently organized into a form that can be easily sorted and understood. Imagine collecting social media data, which could be millions of posts from millions of social media users every day. You can dump every single post into a file, but how can you make sense of it?

Data organization is especially important when dealing with unstructured data. The researcher needs to structure the data in some way that facilitates the analytical process.

Transcription

Collecting data in focus groups, interviews, or other similar interactions produces raw video and audio recordings. This data can often be analyzed for contextual cues such as non-verbal interaction, facial expressions, and accents. However, most traditional analyses of interview and focus group data benefit from converting participants’ words into text.

Recordings are typically transcribed so that the text can be systematically analyzed and incorporated into research papers or presentations. Transcription can be a tedious task, especially if a researcher has to deal with hours of audio data. These days, researchers can often choose between manually transcribing their raw data or using automated transcription services to greatly speed up this process.

Survey data

In online survey platforms, participant responses to closed-ended questions can be easily aggregated in a spreadsheet. Responses to any open-ended questions can also be included in a spreadsheet or saved as separate files for subsequent analysis of the text participants wrote. Since survey data is relatively structured, it tends to be quicker and easier to organize than other forms of qualitative data that are more unstructured, such as interviews or observations.
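The split between closed-ended and open-ended responses might look like the following sketch, which reads a small, made-up CSV export with Python's standard `csv` module. The column names, the sample rows, and the `split_responses` helper are assumptions for illustration; real exports differ between survey platforms.

```python
import csv
import io

# Hypothetical survey export: one closed-ended and one open-ended question.
raw_export = """respondent,satisfaction,comments
r1,4,"Delivery was quick, but setup was confusing."
r2,5,
r3,2,"Support never replied."
"""

CLOSED_QUESTIONS = ["satisfaction"]
OPEN_QUESTIONS = ["comments"]

def split_responses(csv_text):
    """Separate structured answers from free-text answers."""
    closed_rows, open_texts = [], []
    for row in csv.DictReader(io.StringIO(csv_text)):
        closed = {"respondent": row["respondent"]}
        closed.update({q: row[q] for q in CLOSED_QUESTIONS})
        closed_rows.append(closed)
        for q in OPEN_QUESTIONS:
            answer = (row[q] or "").strip()
            if answer:  # skip questions the respondent left blank
                open_texts.append({"respondent": row["respondent"],
                                   "question": q, "text": answer})
    return closed_rows, open_texts

closed_rows, open_texts = split_responses(raw_export)
print(len(closed_rows), "structured rows;", len(open_texts), "free-text answers")
```

Keeping the respondent identifier on every free-text answer makes it possible to link comments back to the structured responses during analysis.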

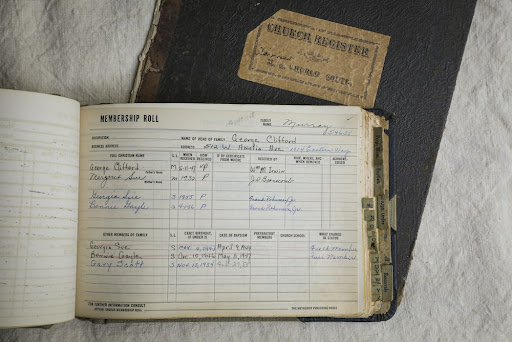

Field notes and artifacts

In ethnographic research or research involving direct observation, gathering data often means writing notes or taking photographs during field work. While field notes can be typed into a document for data analysis, the researcher can also scan their notes into an image or a PDF for later organization.

This degree of flexibility allows researchers to code all forms of data that aren't textual in nature but can still provide useful data points for analysis and theoretical development.

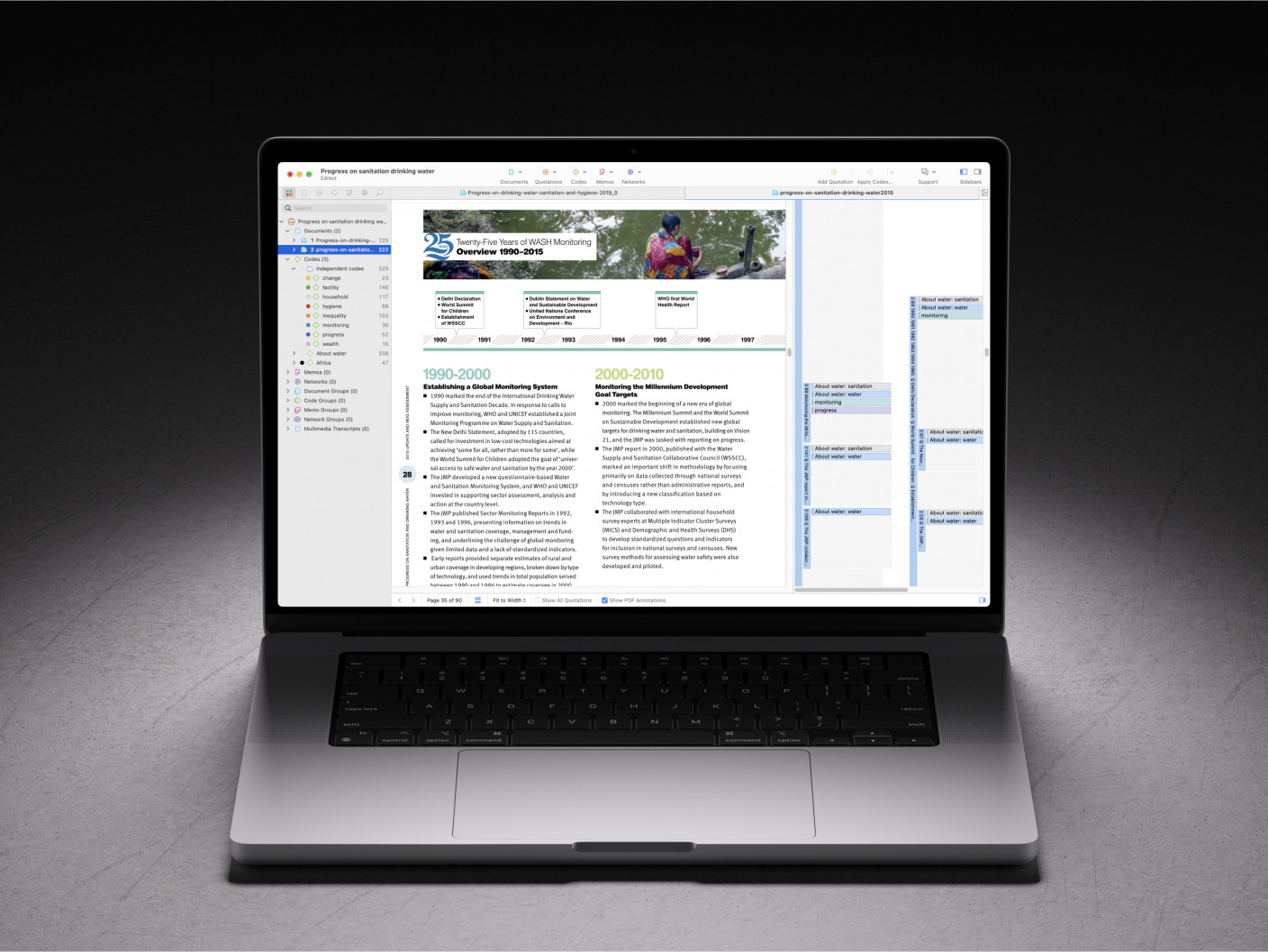

Coding

Coding is among the most fundamental skills in qualitative research, because coding is how researchers can effectively reduce large datasets into a series of compact codes for later analysis. If you are dealing with dozens or hundreds of pages of qualitative data, then applying codes to your data is a key method for condensing, synthesizing, and understanding the data.
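To show how codes condense data, here is a minimal sketch in which a few interview excerpts have already been assigned codes by a researcher. The excerpts, code names, and helper functions are invented for illustration; the interpretive work of deciding which codes apply is done by the researcher, and the script only tallies and groups the result.

```python
from collections import Counter, defaultdict

# Hypothetical excerpts, each already tagged with researcher-assigned codes.
coded_segments = [
    {"source": "interview_01", "text": "I compared fuel costs first.",
     "codes": ["cost"]},
    {"source": "interview_01", "text": "My last car kept breaking down.",
     "codes": ["reliability"]},
    {"source": "interview_02", "text": "Cheap to run and it never fails.",
     "codes": ["cost", "reliability"]},
    {"source": "interview_02", "text": "My sister recommended the brand.",
     "codes": ["social influence"]},
]

def code_frequencies(segments):
    """Count how many segments each code was applied to."""
    return Counter(code for seg in segments for code in seg["codes"])

def segments_by_code(segments):
    """Group excerpt texts under every code attached to them."""
    index = defaultdict(list)
    for seg in segments:
        for code in seg["codes"]:
            index[code].append(seg["text"])
    return dict(index)

frequencies = code_frequencies(coded_segments)
grouped = segments_by_code(coded_segments)
print(frequencies.most_common())
```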